As automation becomes more flexible and data-driven, manufacturers are increasingly looking for ways to simplify robot teaching without sacrificing accuracy. At JAKA, we focus on developing collaborative automation solutions that balance ease of use with industrial reliability. In our projects, a cobot system integrated with visual perception has proven an effective way to reduce setup time and improve adaptability. This guide explains how we teach waypoints using a vision-guided robot, breaking the process into practical steps that reflect real production requirements rather than theoretical workflows.

Understanding Waypoint Teaching in a Vision-Based Cobot System

Waypoint teaching defines where a robot moves and how it interacts with its environment. In a vision-based cobot system, visual feedback reduces dependence on rigid, fixed-point programming. We begin by letting the robot identify reference features through its camera instead of relying only on manually taught coordinates. Waypoints tied to these features can then be regenerated when parts shift slightly on the work surface. With a vision-guided robot, waypoint positions are corrected from current image data rather than left at their originally taught values, which reduces manual correction and supports repeatable results across batches. In our experience, this method is especially useful for picking, alignment, and inspection, where positional variation is unavoidable.
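To make feature-relative waypoints concrete, here is a minimal planar sketch. It assumes the camera reports the reference feature as a 2D pose (x, y in millimetres, heading in radians) already expressed in the robot base frame, and that only the XY position and heading matter. `Pose2D`, `relative_to`, `compose`, and `relocate_waypoints` are hypothetical names for illustration, not part of any JAKA API.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Pose2D:
    """Planar pose: x, y in mm, theta in radians (illustrative convention)."""
    x: float
    y: float
    theta: float


def wrap_angle(a: float) -> float:
    """Normalize an angle to the range [-pi, pi]."""
    return math.atan2(math.sin(a), math.cos(a))


def relative_to(frame: Pose2D, world: Pose2D) -> Pose2D:
    """Teach time: express a base-frame waypoint in the feature's own frame."""
    dx, dy = world.x - frame.x, world.y - frame.y
    c, s = math.cos(frame.theta), math.sin(frame.theta)
    # Rotate the offset by -theta (transpose of the feature's rotation matrix).
    return Pose2D(c * dx + s * dy, -s * dx + c * dy, wrap_angle(world.theta - frame.theta))


def compose(frame: Pose2D, local: Pose2D) -> Pose2D:
    """Run time: express a feature-relative waypoint in the robot base frame."""
    c, s = math.cos(frame.theta), math.sin(frame.theta)
    return Pose2D(
        frame.x + c * local.x - s * local.y,
        frame.y + s * local.x + c * local.y,
        wrap_angle(frame.theta + local.theta),
    )


def relocate_waypoints(detected: Pose2D, offsets: list[Pose2D]) -> list[Pose2D]:
    """Map feature-relative waypoints onto the feature's newly detected pose."""
    return [compose(detected, w) for w in offsets]


# Teach time: feature detected at (300, 100, 0 deg); operator hand-guides two waypoints.
ref_at_teach = Pose2D(300.0, 100.0, 0.0)
taught = [Pose2D(270.0, 100.0, 0.0), Pose2D(300.0, 100.0, 0.0)]  # approach, grasp
offsets = [relative_to(ref_at_teach, w) for w in taught]

# Run time: the same part is detected shifted and rotated by 90 degrees.
detected_now = Pose2D(400.0, 150.0, math.radians(90))
for wp in relocate_waypoints(detected_now, offsets):
    print(f"x={wp.x:.1f} y={wp.y:.1f} theta={math.degrees(wp.theta):.1f}")
# x=400.0 y=120.0 theta=90.0
# x=400.0 y=150.0 theta=90.0
```

Because only the offsets from the feature are stored, the approach point stays 30 mm "behind" the part along its own axis wherever the part lands. A full 6-DOF setup would also carry height and tilt, but the planar case shows the core idea.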

Step-by-Step Waypoint Teaching with Vision Guidance

Our process starts with visual calibration, which establishes the mapping between the camera's image coordinates and the robot's coordinate system. After calibration, we define task objects through visual recognition rather than fixed mechanical references. The operator then guides the robot to sample waypoints while the vision system records their spatial relationship to the recognized objects. This allows a cobot system to adapt its motion path to the detected object positions at run time. During operation, the vision-guided robot continuously validates waypoint data against current detections, which helps maintain consistency without frequent re-teaching. This workflow supports efficient deployment in environments where product models or layouts change regularly.
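The calibration step can be illustrated with a simple case: a fixed camera looking straight down at a flat work surface, with lens distortion already corrected. Under those assumptions, the mapping from pixel coordinates to robot base coordinates is approximately a 2D similarity transform (uniform scale, rotation, translation), which can be fitted by least squares from a few points known in both frames, for example marks the operator touches with the tool. This is a generic technique, not JAKA's calibration routine; `fit_similarity`, `cam_to_robot`, `rms_error`, and the sample numbers are hypothetical. Treating points as complex numbers keeps the closed-form solution short.

```python
import cmath
import math


def fit_similarity(cam_pts, robot_pts):
    """Least-squares 2D similarity mapping camera pixels to robot base mm.

    Returns complex (a, b) such that robot ~= a * cam + b, where abs(a) is the
    scale in mm per pixel and the phase of a is the rotation.
    """
    if len(cam_pts) != len(robot_pts) or len(cam_pts) < 2:
        raise ValueError("need at least two matching point pairs")
    p = [complex(x, y) for x, y in cam_pts]
    q = [complex(x, y) for x, y in robot_pts]
    n = len(p)
    p_mean, q_mean = sum(p) / n, sum(q) / n
    dp = [z - p_mean for z in p]  # centered camera points
    dq = [z - q_mean for z in q]  # centered robot points
    den = sum(z.real ** 2 + z.imag ** 2 for z in dp)
    if den == 0:
        raise ValueError("camera points must not all coincide")
    a = sum(u.conjugate() * v for u, v in zip(dp, dq)) / den
    b = q_mean - a * p_mean
    return a, b


def cam_to_robot(a, b, pt):
    """Map one pixel coordinate (u, v) to robot (x, y) in mm."""
    z = a * complex(*pt) + b
    return z.real, z.imag


def rms_error(a, b, cam_pts, robot_pts):
    """Root-mean-square residual in mm over the calibration points."""
    total = 0.0
    for c, (rx, ry) in zip(cam_pts, robot_pts):
        x, y = cam_to_robot(a, b, c)
        total += (x - rx) ** 2 + (y - ry) ** 2
    return math.sqrt(total / len(cam_pts))


# Four calibration marks: pixel positions and the robot positions touched by the tool.
cam = [(0, 0), (100, 0), (0, 100), (100, 100)]
robot = [(200, -50), (200, 0), (150, -50), (150, 0)]
a, b = fit_similarity(cam, robot)
print(f"scale={abs(a):.3f} mm/px, rotation={math.degrees(cmath.phase(a)):.1f} deg")
# scale=0.500 mm/px, rotation=90.0 deg
print(cam_to_robot(a, b, (50, 50)))  # (175.0, -25.0)
print(f"rms={rms_error(a, b, cam, robot):.3f} mm")  # rms=0.000 mm
```

With real measurements the residual will not be zero; a value well above the expected measurement noise usually points to a mismatched point pair or a camera that is not truly perpendicular to the surface, in which case a full homography or hand-eye calibration would be the more appropriate model.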

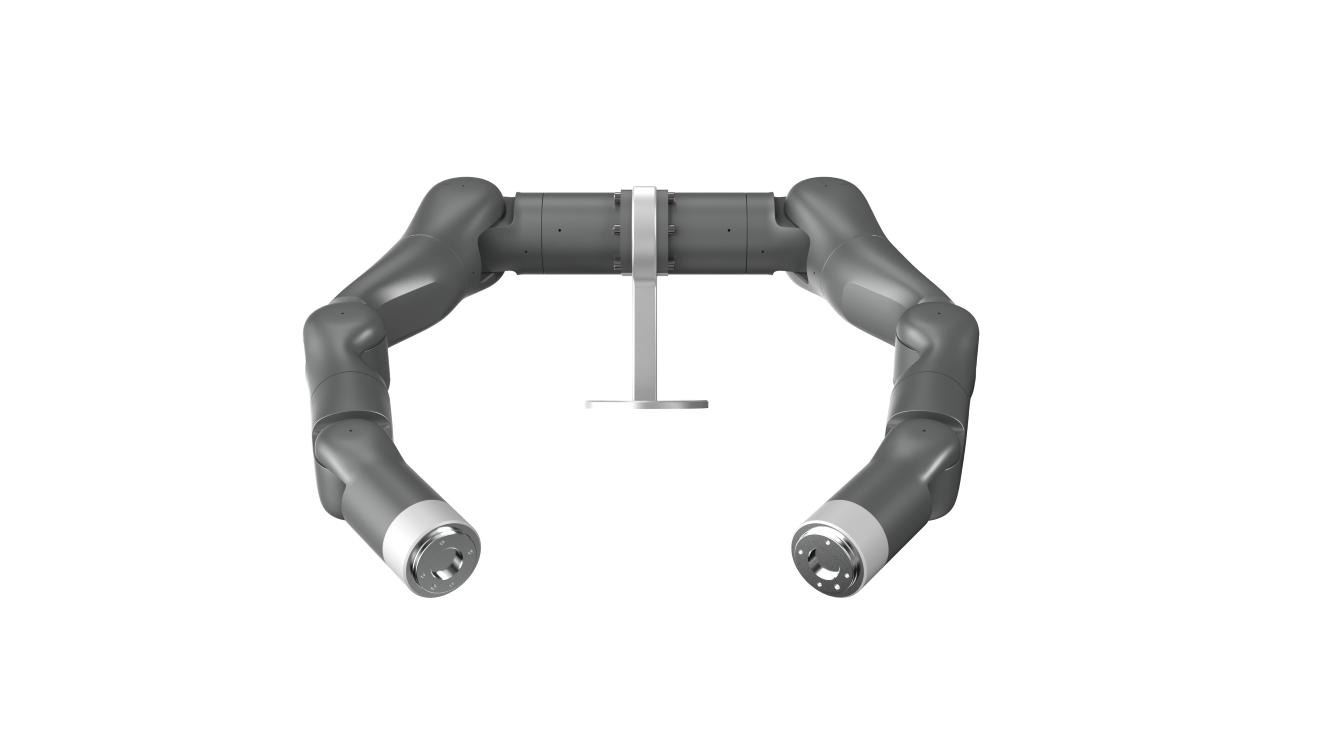

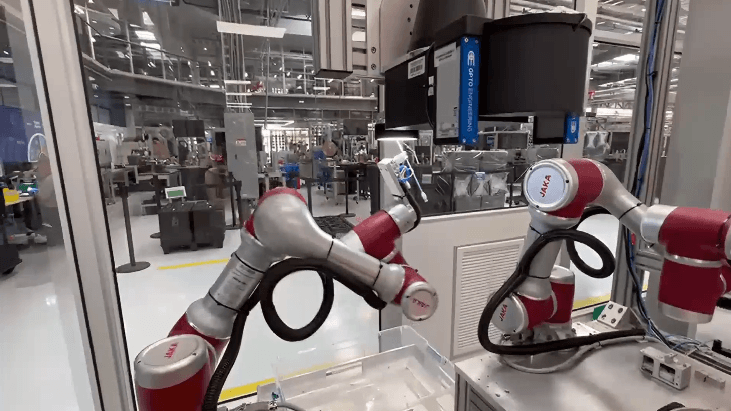

Applying Intelligent Visual Perception with JAKA A12L

In practical deployments, we often implement this process with the JAKA A12L intelligent visual perception robot. It combines collaborative robot functionality with integrated vision, so users can teach waypoints without complex external hardware. Features such as internal wiring, autofocus, and 2.5D vision streamline visual setup and data acquisition. Through software integration, vision tasks and motion control are configured together in a single interface. This integration makes waypoint teaching smoother and shortens the learning curve for operators working with a vision-guided robot in daily production.

Conclusion: Building Flexible Waypoint Teaching Workflows

Teaching waypoints with vision-based automation is not about replacing human expertise but about making it easier to translate that expertise into stable robot behavior. By combining visual perception with structured teaching steps, we can deploy a vision-guided robot that responds more effectively to real-world variation. At JAKA, we continue refining our cobot system solutions to support practical, adaptable automation. A structured approach to waypoint teaching helps ensure consistency, scalability, and long-term usability across manufacturing environments.